Khudanpur remembers chatting with Eliza while in graduate school. For all its historic importance in the tech industry, he said it didn’t take long to see its limitations. It could only convincingly mimic a text conversation for about a dozen back-and-forths before “you realize, no, it’s not really smart, it’s just trying to prolong the conversation one way or the other,” said Khudanpur, an expert in the application of information-theoretic methods to human language technologies and professor at Johns Hopkins University.

Another early chatbot was developed by psychiatrist Kenneth Colby at Stanford in 1971 and named “Parry” because it was meant to imitate a paranoid schizophrenic. (The New York Times’ 2001 obituary for Colby included a colorful chat that ensued when researchers brought Eliza and Parry together.)

In the decades that followed these tools, however, there was a shift away from the idea of “conversing with computers.” Khudanpur said that’s “because it turned out the problem is very, very hard.” Instead, the focus turned to “goal-oriented dialogue,” he said.

Some also make a case for these chatbots providing comfort to the lonely, elderly, or isolated. At least one startup has gone so far as to use the technology as a tool to seemingly keep dead relatives alive by creating computer-generated versions of them based on uploaded chats.

Others, meanwhile, warn that the technology behind AI-powered chatbots remains much more limited than some people might wish. “These technologies are really good at faking out humans and sounding human-like, but they’re not deep,” said Gary Marcus, an AI researcher and New York University professor emeritus. “They’re mimics, these systems, but they’re very superficial mimics. They don’t really understand what they’re talking about.”

Still, as these services expand into more corners of our lives, and as companies take steps to personalize these tools more, our relationships with them may only grow more complicated, too.
This tech underpins the digital assistants so many of us have come to use on a daily basis for playing music, ordering deliveries, or fact-checking homework assignments.

Eliza reacted to key words and then essentially punted the dialogue back to the user.

Contemporary chatbots can also elicit strong emotional reactions from users when they don’t work as expected, or when they’ve become so good at imitating the flawed human speech they were trained on that they begin spewing racist and incendiary comments. It didn’t take long, for example, for Meta’s new chatbot to stir up controversy this month by spouting wildly untrue political commentary and antisemitic remarks in conversations with users.

Even so, proponents of this technology argue it can streamline customer service jobs and increase efficiency across a much wider range of industries.

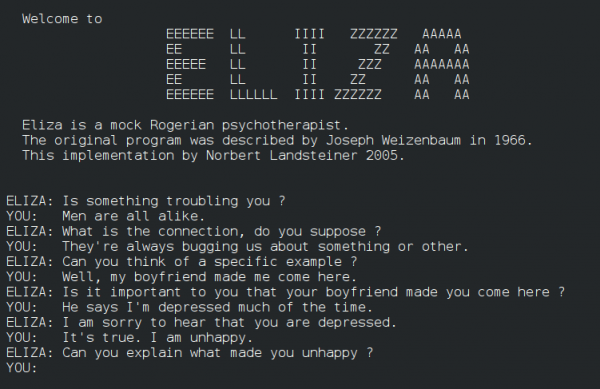

Eliza, which is widely characterized as the first chatbot, wasn’t as versatile as similar services today. The program, which relied on natural language understanding, reacted to key words and then essentially punted the dialogue back to the user. Nonetheless, as Joseph Weizenbaum, the computer scientist at MIT who created Eliza, wrote in a research paper in 1966, “some subjects have been very hard to convince that ELIZA (with its present script) is not human.”

To Weizenbaum, that fact was cause for concern, according to his 2008 MIT obituary. Those interacting with Eliza were willing to open their hearts to it, even knowing it was a computer program. “ELIZA shows, if nothing else, how easy it is to create and maintain the illusion of understanding, hence perhaps of judgment deserving of credibility,” Weizenbaum wrote in 1966. “A certain danger lurks there.” He spent the end of his career warning against giving machines too much responsibility and became a harsh, philosophical critic of AI.
